WoT-AI: How Thing Description Quality Shapes LLM-Based IoT Agents

Project Overview

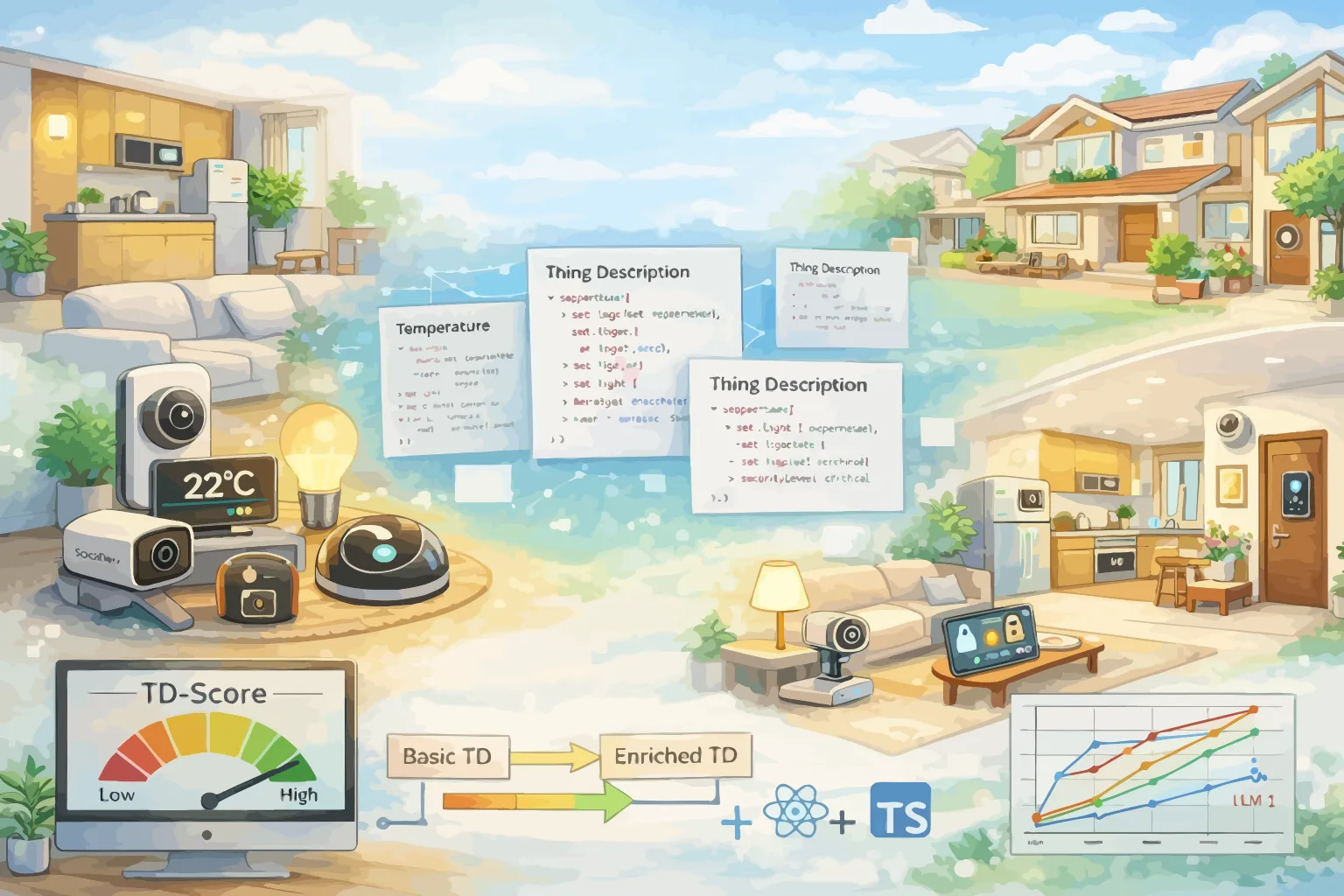

The W3C Web of Things (WoT) framework provides a standardised way to describe IoT devices through Thing Descriptions (TDs), machine-readable metadata documents that specify a device's properties, actions, events, and security requirements. As Large Language Models (LLMs) are increasingly used to build autonomous IoT agents that interpret these descriptions and interact with devices, a critical question arises: how does the quality and expressiveness of a Thing Description affect an LLM agent's ability to understand and control IoT devices correctly?

This project addresses that question through a systematic research programme. The WoT Agent Research Platform provides an experimental environment combining a FastAPI backend, a React/TypeScript frontend, virtual WoT devices, and LLM-powered agents to run controlled experiments across multiple TD variants. The platform simulates a smart home environment with diverse device types, from lighting and climate control to security systems and appliances. Each device is described by Thing Descriptions at varying levels of expressiveness, from minimal schemas to richly annotated documents with semantic context, safety constraints, and interaction guidance.

A central contribution is the systematic evaluation of how TD features impact agent performance. The research compares multiple LLMs across TD variants that differ in semantic richness, interaction detail, safety metadata, and contextual annotations. Results from large-scale experiments reveal which TD features matter most for agent comprehension and which models are most sensitive to description quality. The work produces actionable findings for both TD authors seeking to write better descriptions and agent developers building more robust IoT systems.

The project also contributes practical tools to the WoT ecosystem. TD-Score evaluates the quality of existing Thing Descriptions across multiple dimensions, providing a quantitative assessment of expressiveness. TD-Enrich uses LLMs to automatically augment minimal Thing Descriptions with richer metadata, bridging the gap between the sparse descriptions commonly found in practice and the detailed annotations that agents need. Both tools, along with interactive tutorials and educational materials, are designed to support the broader WoT community in producing more reliable, teachable IoT systems.

The WoT Agent Research Platform is an experimental environment for studying how AI agents interpret and act on W3C Web of Things Thing Descriptions. It combines a backend server, a web frontend, virtual WoT devices, and an LLM-powered agent to run controlled experiments across multiple TD variants.

Thing Description Variants. Each virtual device in the platform is described by multiple TD variants ranging from minimal schemas to richly annotated documents. Minimal TDs contain only the basic property/action/event structure required by the WoT specification. Baseline TDs add standard metadata such as titles, descriptions, and units. Enhanced TDs include semantic annotations, safety constraints, interaction guidance, value ranges, and contextual information. By comparing agent performance across these variants, the research isolates which TD features have the greatest impact on agent comprehension.
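To make the three levels concrete, the sketch below shows how a single property affordance might look in each variant. It is an illustration only: the lamp device, the URL, the `ex:` annotation terms, and the guidance text are hypothetical and do not come from the platform. The keys `type`, `forms`, `title`, `description`, `unit`, `minimum`, `maximum`, and `@type` are standard TD vocabulary.

```python
# Hypothetical "brightness" property of a virtual lamp at three levels of detail.
# The URL and every "ex:" term are illustrative assumptions, not platform content.

MINIMAL = {
    "type": "integer",
    "forms": [{"href": "http://localhost:8080/lamp/properties/brightness"}],
}

# Baseline: adds human-readable metadata and a unit.
BASELINE = {
    **MINIMAL,
    "title": "Brightness",
    "description": "Current brightness of the lamp.",
    "unit": "percent",
}

# Enhanced: adds a value range, a semantic type, and free-text guidance.
ENHANCED = {
    **BASELINE,
    "minimum": 0,
    "maximum": 100,
    "@type": "ex:BrightnessLevel",  # hypothetical semantic annotation
    "ex:interactionGuidance": "Read the current value before writing a new one.",
    "ex:safetyConstraint": "Avoid rapid repeated changes.",
}


def added_keys(simpler: dict, richer: dict) -> list[str]:
    """Return the keys that the richer variant adds over the simpler one."""
    return sorted(set(richer) - set(simpler))


if __name__ == "__main__":
    print("baseline adds:", added_keys(MINIMAL, BASELINE))
    print("enhanced adds:", added_keys(BASELINE, ENHANCED))
```

Building each variant on top of the previous one keeps the differences between variants explicit, which is the property a controlled comparison relies on.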

Experiment Framework. The platform supports systematic experimentation across multiple dimensions: TD variant, LLM model, device type, and task complexity. Experiments are defined as structured scenarios that specify a natural language goal, the target device and TD variant, and expected outcomes. The system automatically executes experiments, records agent actions, and computes success metrics including task completion rate, action accuracy, and feature attribution scores that identify which TD elements the agent attended to.
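A scenario and two of the metrics named above can be sketched as follows. This is a minimal sketch under stated assumptions, not the platform's implementation: the class names, the string encoding of actions, and the exact metric definitions (exact-match completion, position-wise action accuracy) are all hypothetical. Feature attribution is not covered here.

```python
from dataclasses import dataclass


@dataclass
class Scenario:
    """One experiment definition (field names are illustrative assumptions)."""
    goal: str                    # natural language goal given to the agent
    device: str                  # target device
    td_variant: str              # e.g. "minimal", "baseline", "enhanced"
    expected_actions: list[str]  # expected outcome, encoded as action strings


@dataclass
class RunResult:
    scenario: Scenario
    recorded_actions: list[str]  # what the agent actually did

    @property
    def completed(self) -> bool:
        # Assumption: a task counts as completed only on an exact match.
        return self.recorded_actions == self.scenario.expected_actions

    @property
    def action_accuracy(self) -> float:
        # Assumption: position-wise matches divided by the longer sequence,
        # so both missing and extra actions lower the score.
        expected = self.scenario.expected_actions
        longest = max(len(expected), len(self.recorded_actions))
        if longest == 0:
            return 1.0
        hits = sum(e == r for e, r in zip(expected, self.recorded_actions))
        return hits / longest


def completion_rate(results: list[RunResult]) -> float:
    """Fraction of runs whose task was completed."""
    if not results:
        return 0.0
    return sum(r.completed for r in results) / len(results)


if __name__ == "__main__":
    scenario = Scenario(
        goal="Dim the living room lamp to 30%",
        device="lamp",
        td_variant="enhanced",
        expected_actions=["writeproperty:brightness=30"],
    )
    runs = [
        RunResult(scenario, ["writeproperty:brightness=30"]),
        RunResult(scenario, ["writeproperty:brightness=30", "invokeaction:toggle"]),
    ]
    print(completion_rate(runs), [r.action_accuracy for r in runs])
```

Grouping such results by TD variant, model, device type, and task complexity would then give one completion rate per experimental cell.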

TD-Score and TD-Enrich. TD-Score provides a quantitative assessment of Thing Description quality across dimensions including semantic richness, interaction completeness, safety coverage, and metadata quality. TD-Enrich leverages LLMs to automatically augment sparse Thing Descriptions with richer annotations, producing enhanced TDs that improve agent performance without requiring manual authoring effort. Together these tools address a practical gap: most real-world TDs are minimally specified, yet agents benefit significantly from richer descriptions.
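As a rough illustration of what a per-dimension score could look like, the sketch below computes a simple metadata coverage ratio: the share of expected metadata fields present across a TD's affordances. This is a toy example and not TD-Score's actual method, which is not specified here. The function name, the choice of fields, and the example device are assumptions.

```python
def metadata_coverage(td: dict, fields: tuple[str, ...] = ("title", "description")) -> float:
    """Share of the given metadata fields present across all affordances.

    Toy metric for illustration only; not TD-Score's actual scoring method.
    """
    affordances = []
    for group in ("properties", "actions", "events"):
        affordances.extend(td.get(group, {}).values())
    if not affordances or not fields:
        return 0.0
    present = sum(1 for a in affordances for f in fields if f in a)
    return present / (len(affordances) * len(fields))


if __name__ == "__main__":
    # Hypothetical TD fragment: one documented property, one bare action.
    lamp_td = {
        "title": "Lamp",
        "properties": {
            "brightness": {
                "type": "integer",
                "title": "Brightness",
                "description": "Current brightness of the lamp.",
            }
        },
        "actions": {"toggle": {}},
    }
    print(metadata_coverage(lamp_td))
```

A tool in the spirit of TD-Enrich would aim to raise such a ratio by filling in the missing fields, here the title and description of the bare `toggle` action.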

Tutorials and Community. The project produces interactive tutorials and educational materials demonstrating how WoT standards support LLM-based IoT applications. These resources are shared through the W3C Web of Things Community Group, contributing to meetups, WoT Week events, and the growing community of developers exploring the intersection of web standards and AI-powered device interaction.

Team