CASPER+ Plus for Pattern of Life

Project Overview

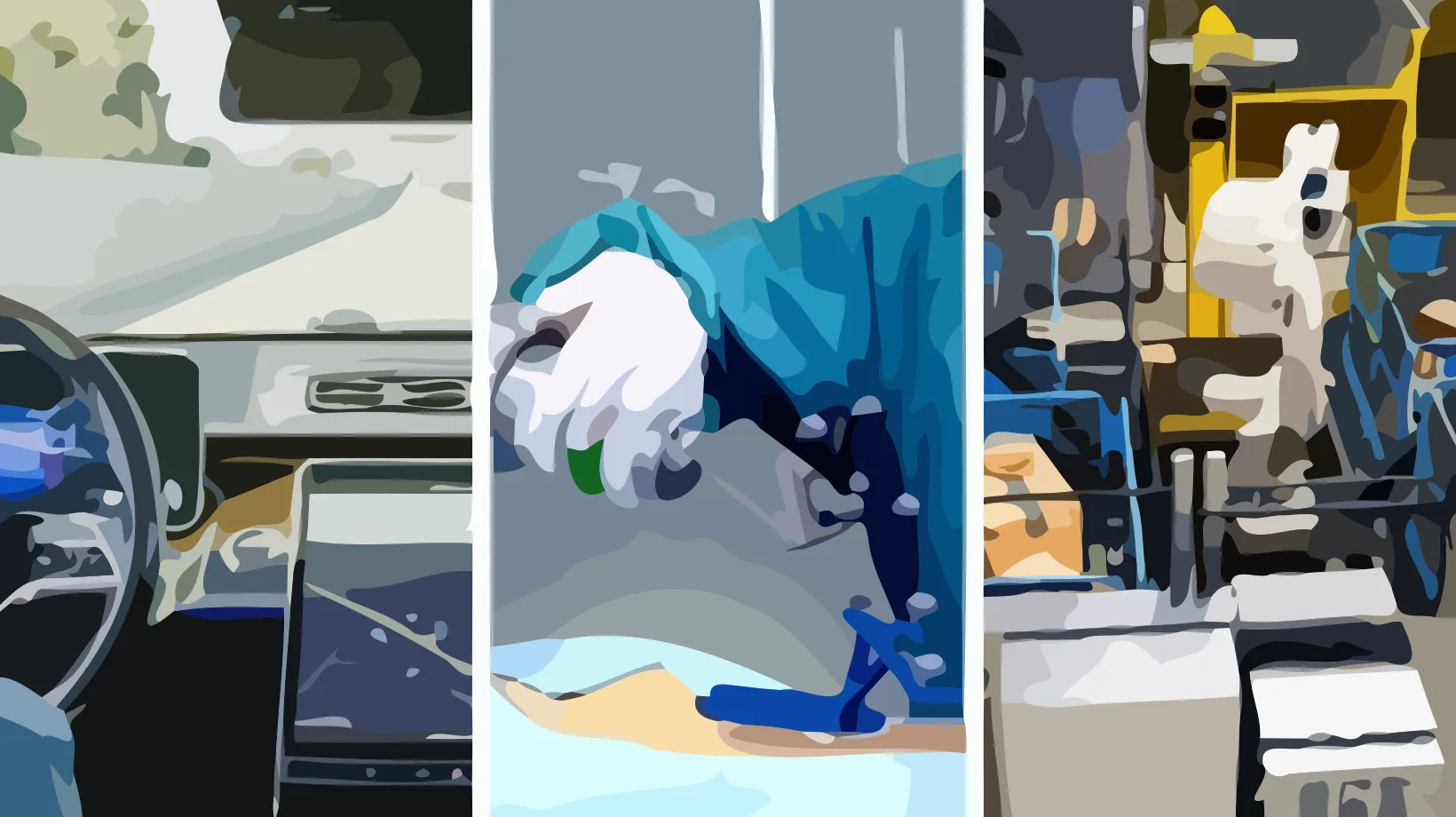

Industrial collaborative robots are increasingly deployed in manufacturing environments where they perform repetitive tasks such as screwdriving, painting, and pick-and-place operations. Ensuring the safety and reliability of these systems demands continuous monitoring that detects not only the presence of anomalies but also their nature, severity, and root cause. CASPER+Plus extends the foundational CASPER work on context-aware anomaly detection for industrial robotic arms, published in ACM Transactions on Internet of Things (2024) and IEEE Internet of Things Journal (2025), into a suite of studies that examine how much a single, externally mounted inertial measurement unit (IMU) can reveal about the operational state of a collaborative robot. The program is unified by a central premise: the signal from a low-cost IMU clamped to the exterior of a robotic arm carries far more information than a binary anomaly label. Funded by an NVIDIA Academic Grant providing an RTX PRO 6000 Blackwell workstation and four Jetson AGX Orin developer kits, the project uses GPU-accelerated computing for real-time edge inference on industrial sensor streams.

The program investigates five interrelated research directions using a single, extensively characterised dataset comprising 26 hours of continuous UR3e operation across three manufacturing tasks, with anomalies injected at eight precisely controlled severity levels and five distinct physical disruption types.

Objective 1: Unsupervised Task Segmentation and Discovery. The first direction addresses whether raw IMU signals alone can reveal the structure of a robot’s work. By applying unsupervised temporal segmentation methods (including hidden Markov models, Bayesian change-point detection, and neural temporal clustering) directly to the IMU stream, the goal is to automatically discover distinct tasks and individual motion primitives without access to any labels or robot controller data. The aim is plug-and-play monitoring, in which a sensor can be attached to an unfamiliar robot and immediately begin building a structured understanding of its behaviour.
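To make the segmentation idea concrete, here is a minimal sketch of label-free boundary detection on a one-dimensional signal. It uses a simple sliding-window mean-shift score, which is a much cruder stand-in for the hidden Markov models, Bayesian change-point detection, and neural clustering named above. The synthetic signal, the function names, and the `window` and `threshold` values are all assumptions chosen for illustration, not part of the project.

```python
import random
from statistics import fmean


def detect_change_points(signal, window=50, threshold=1.0):
    """Return indices where the mean of the next `window` samples differs
    from the mean of the previous `window` samples by more than `threshold`.
    Within each run of consecutive candidates, only the strongest is kept."""
    change_points = []
    best = None  # (index, score) of the strongest candidate in the current run
    for i in range(window, len(signal) - window + 1):
        left = fmean(signal[i - window:i])
        right = fmean(signal[i:i + window])
        score = abs(right - left)
        if score > threshold:
            if best is None or score > best[1]:
                best = (i, score)
        elif best is not None:
            change_points.append(best[0])
            best = None
    if best is not None:
        change_points.append(best[0])
    return change_points


def synthetic_imu(seed=0):
    """Hypothetical stand-in for one IMU channel: three 'tasks' of 200
    samples each, with different mean levels and Gaussian noise."""
    rng = random.Random(seed)
    levels = [0.0, 3.0, -2.0]
    return [lvl + rng.gauss(0.0, 0.2) for lvl in levels for _ in range(200)]


signal = synthetic_imu()
boundaries = detect_change_points(signal, window=50, threshold=1.0)
print(boundaries)  # indices close to the true boundaries at 200 and 400
```

On real IMU data the segments would differ in spectral content and motion shape rather than only in mean level, which is why the richer models listed in the objective are the actual candidates.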

Objective 2: Anomaly Severity Estimation. The second direction challenges the dominant binary framing of anomaly detection. The dataset uniquely supports this investigation because anomalies were injected at eight known severity levels ranging from -50% to +100% of nominal joint velocity. The work develops regression models that output a continuous severity score mapping to the physical magnitude of deviation, producing the first benchmark for severity-graded anomaly detection on industrial sensor time series.
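The shift from a binary label to a continuous severity score can be sketched with a one-feature least-squares regression. Everything in the example is an assumption for illustration: the four severity levels are placeholders within the stated range (not the dataset's eight levels), and the synthetic feature is simply assumed to scale linearly with joint velocity.

```python
import random
from statistics import fmean


def fit_line(x, y):
    """Ordinary least squares for y = slope * x + intercept."""
    mx, my = fmean(x), fmean(y)
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, my - slope * mx


rng = random.Random(0)

# Placeholder severity levels (fractional change in joint velocity).
# These are NOT the eight levels used in the dataset.
ILLUSTRATIVE_LEVELS = [-0.5, 0.0, 0.5, 1.0]

features, severities = [], []
for level in ILLUSTRATIVE_LEVELS:
    for _ in range(50):
        # Assumed feature: a motion-energy statistic proportional to velocity,
        # with a nominal value of 2.0 and a little measurement noise.
        features.append(2.0 * (1.0 + level) + rng.gauss(0.0, 0.05))
        severities.append(level)

slope, intercept = fit_line(features, severities)


def predict_severity(feature):
    """Map a feature value to a continuous severity estimate."""
    return slope * feature + intercept


print(round(predict_severity(3.0), 2))  # a feature 50% above nominal
```

A regression output of this kind can still be thresholded to recover a binary alarm, but it also preserves the sign and magnitude of the deviation.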

Objective 3: Cold-Start Pattern-of-Life Establishment. The third direction investigates how many operational cycles must be observed before anomaly detection becomes reliable when a sensor is attached to a new robot. This includes evaluation of time-series foundation models such as TimesFM and Chronos in zero-shot and few-shot settings, testing whether pre-trained models can provide useful anomaly detection before any task-specific data is collected.
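One simple way to frame the cold-start question is a detector that abstains until it has seen enough nominal cycles. The sketch below keeps a running mean and variance of a per-cycle feature (Welford's online algorithm) and returns no verdict before `min_cycles` observations. The class name, the per-cycle feature, and the default thresholds are hypothetical. It does not involve TimesFM or Chronos, only a statistical baseline against which such models could be compared.

```python
import math


class ColdStartBaseline:
    """Running z-score detector that abstains during the cold-start phase."""

    def __init__(self, min_cycles=10, z_threshold=4.0):
        self.min_cycles = min_cycles    # cycles required before scoring
        self.z_threshold = z_threshold  # assumed alarm threshold
        self.n = 0
        self.mean = 0.0
        self.m2 = 0.0  # sum of squared deviations from the running mean

    def update(self, x):
        """Fold one nominal per-cycle feature value into the baseline."""
        self.n += 1
        delta = x - self.mean
        self.mean += delta / self.n
        self.m2 += delta * (x - self.mean)

    def is_anomalous(self, x):
        """Return None while the baseline is immature, else True or False."""
        if self.n < max(self.min_cycles, 2):
            return None
        std = math.sqrt(self.m2 / (self.n - 1))
        if std == 0.0:
            return x != self.mean
        return abs(x - self.mean) / std > self.z_threshold


baseline = ColdStartBaseline(min_cycles=5)
for value in [1.0, 1.1, 0.9, 1.05]:
    baseline.update(value)
early_verdict = baseline.is_anomalous(5.0)  # only four cycles seen so far
baseline.update(0.95)
late_verdict = baseline.is_anomalous(5.0)
nominal_verdict = baseline.is_anomalous(1.02)
print(early_verdict, late_verdict, nominal_verdict)
```

Sweeping `min_cycles` and measuring detection quality at each setting gives the kind of learning curve the objective describes.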

Objective 4: External IMU to Robot State Estimation. The fourth direction poses an inverse problem: can the external IMU signal be used to reconstruct the robot’s internal joint positions, velocities, and end-effector trajectory? This would allow an independent, tamper-resistant estimate of the robot’s kinematic state using only a commodity sensor.

Objective 5: Cross-Modal Fusion and Spoofing Detection. The fifth direction builds on state estimation to address a security-critical question. Disagreement between the external IMU estimate and the robot’s own reported telemetry becomes a powerful indicator of data spoofing or sensor manipulation. Fusion architectures combine external IMU data with robot telemetry when both are trustworthy, while simultaneously monitoring cross-modal consistency as a spoofing detection mechanism.
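The cross-modal consistency check can be illustrated with a windowed residual test between an IMU-derived estimate and the robot's reported telemetry. This is a minimal sketch under assumed conditions: both signals are a single joint-velocity channel sampled on the same clock, the "spoofed" telemetry is a frozen constant value, and the `window` and `threshold` values are arbitrary. The function name is hypothetical.

```python
import math


def spoofing_flags(estimated, reported, window=20, threshold=0.1):
    """Flag each non-overlapping window whose RMS disagreement between the
    external estimate and the reported telemetry exceeds `threshold`."""
    if len(estimated) != len(reported):
        raise ValueError("signals must have the same length")
    flags = []
    for start in range(0, len(estimated) - window + 1, window):
        pairs = zip(estimated[start:start + window],
                    reported[start:start + window])
        rms = math.sqrt(sum((e - r) ** 2 for e, r in pairs) / window)
        flags.append(rms > threshold)
    return flags


# Synthetic ground truth: a sinusoidal joint velocity over 100 samples.
true_velocity = [math.sin(0.1 * t) for t in range(100)]

# Assumed external estimate: the truth plus a small constant bias.
estimated = [v + 0.01 for v in true_velocity]

# Reported telemetry: honest for 60 samples, then frozen at zero (spoofed).
reported = true_velocity[:60] + [0.0] * 40

flags = spoofing_flags(estimated, reported)
print(flags)  # one boolean per 20-sample window
```

In practice the threshold would need to reflect the accuracy of the state estimator from Objective 4, since estimation error and spoofing both show up as residual.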

Team